Those of you with eagle eyes will have noticed that the ‘user researcher’ role in the Government Digital and Data Profession Capability Framework, published by the Central Digital and Data Office (CDDO), has recently been updated. This blog post shares some of the background and thinking behind the changes, and highlights what they mean for user researchers working in government.

A bit of history

Updating the framework has been a long and collaborative journey, involving many people in the cross-government user research (UR) community since 2019.

The Government Digital and Data Profession Capability Framework was first published in 2017 to provide a common understanding of the different digital, data and technology roles in government. ‘User researcher’ is part of the ‘user-centred design’ group of roles in the framework.

An initial review of the role in 2019 suggested it would benefit from some iteration. Starting with a review of the old framework, a working group identified strengths and gaps, drawing on insights from across departments. Later, an experimental version was piloted at the Department for Business and Trade (DBT), refined with input from community consultations and CDDO.

The updated role now live on the framework reflects the collective efforts of many, showcasing a true cross-government collaboration, with notable thanks to:

- Jo Granton, DBT’s Head of Capability

- Sara Woodman at HM Revenue & Customs (HMRC)

- CDDO Capability team, including Senior Content Designer, Antony Poveda, and Head of User Research, Louise Petre

What problems were we trying to solve?

As we started to develop a new iteration of the role, based on the discovery work we’d done, three key issues emerged.

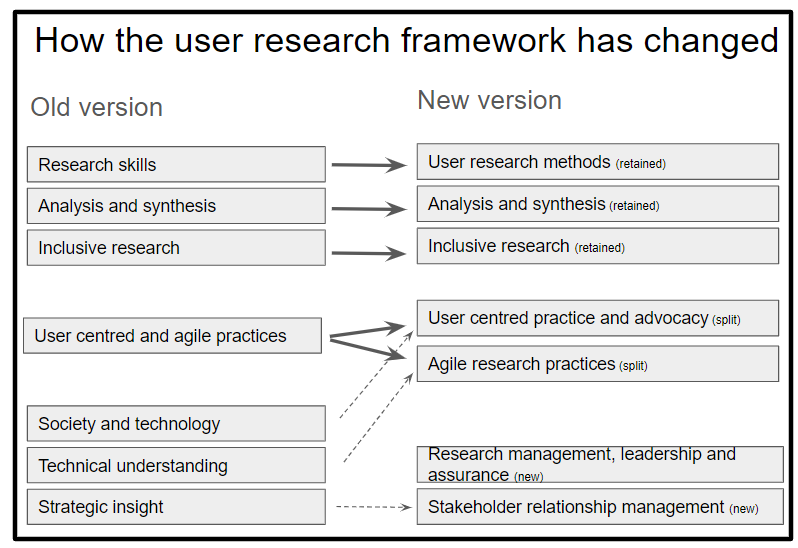

1. More focus on advocating for users

In the old version of the role, there was a skill called ‘user-centred and agile practices’. But while it’s important we conduct research in a user-centred way, there’s not much point to that if our research doesn’t make a difference. So it’s not just about conducting the research. A key part of our role is using it to advocate on behalf of the user - but this was missing.

2. More focus on agile

Similarly, combining user-centred and agile practices into a single skill meant it didn’t do enough to describe the skills we need for effective agile working.

The way we conduct research is quite different from the way, say, academic researchers work. For our work to have impact, we need to deliver insights at a depth and cadence that will support agile delivery teams. Including this as a separate skill in the new role should mean we spend more time developing these skills in the researchers we manage.

3. More focus on stakeholder management and research leadership

We had identified a common scenario for senior user researchers looking for promotion. They would have all the UR ‘craft’ skills, but their manager felt they weren’t ready to step up to lead user researcher.

Often the issue was that, while their research skills were strong, they hadn’t developed the stakeholder and leadership skills that leads and heads of user research depend on. The problem here was that these skills weren’t reflected in the capability framework - and so we weren’t doing enough to help people develop them early in their career.

What’s new?

With this analysis in mind, the new user researcher role:

- retains the three skills reflecting the core research skills

- splits being user centred and agile into two skills

- adds two new skills reflecting the stakeholder and research leadership skills

- retires three of the less useful skills, incorporating the key points in the new skills (so that the overall number of capabilities remains unchanged)

Here is the new list of skills for the role:

- user research methods

- analysis and synthesis

- inclusive research

- user-centred practice and advocacy

- agile research practices

- research management and leadership

- stakeholder relationship management

This new iteration of the framework doesn’t contain much that’s radically new - good user researchers are doing all this already. But the point is these are key skills for all of us. So hopefully the update of the capability framework will encourage us all to look at these as we reflect on our practice and develop our user research careers.

What next?

Departments will be rolling out the new role at their own pace, but please take the time to look at the skills, especially those that appear in the role for the first time.

And look at the Capability Framework for a fuller definition of what’s expected at each seniority level.

- as an early career user researcher, ask yourself if you’re getting the opportunity to develop these skills

- as a line manager, ask yourself how you can support your researchers to learn these skills

- as a community, ask yourselves what we need to put in place to make sure everyone has the chance to build these skills - especially those that appear here for the first time

And lastly, this has been through a big collaborative and consultative process - but it can always be improved. If you have any comments and reflections, please leave them below.


This is the second of 2 blog posts.
