On the Carer’s Allowance exemplar, we wanted to make sure that our service worked for as many people as possible, so we recruited a wide range of users.

We also wanted to make sure that we understood the assisted digital needs of those who can’t use the digital service, so we recruited less digitally confident and skilled participants.

In this post I’ll share what we learned about writing a recruitment brief for participants with low or no digital skills, including what worked and what didn’t.

Recruitment questions: we tried many ways of asking them

We write our own recruitment briefs, and we work with our lab in Manchester to recruit. We’ve tried many ways of asking questions to ensure we recruit the right people.

We’ve found that asking questions such as...

- How many hours do you spend on the internet per week?
- If you needed to claim a benefit, how would you prefer to do it:
  - Online
  - Paper form
  - Telephone
  - Face to face
- How would you rate your computer skills?

...simply weren’t getting us the right people.

We’ve spent time with participants who prefer paper forms and use the internet only a couple of hours a week, but who completed an online Carer's Allowance claim with little difficulty.

The problem seems to be this: people generally rate their digital literacy lower, often significantly lower, than it actually is.

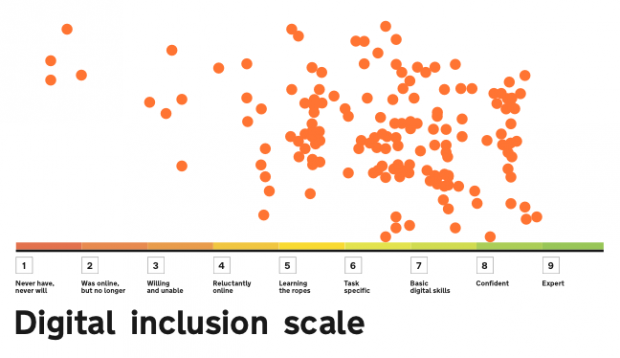

We needed to recruit people lower on the scale

We used the digital inclusion scale to keep track of the digital literacy of our participants. From this, we saw that we needed to recruit people lower on the scale.

We looked to Go ON UK’s definition of ‘Basic Digital Skills’ to help us phrase our recruitment briefs better.

Note: Go ON UK have recently redesigned their advice. This was what we found and used at the time. We still think it's useful.

| Skills | Communicate | Find things | Share personal information |
|---|---|---|---|
| Activity | Send and receive emails | Use a search engine / Browse the internet | Fill out an online form |
| Underpinned by: Keeping safe online | Identify and delete spam | Identify which websites to trust | Evaluate which websites to trust / Set privacy settings |

We asked different questions

As a result of our research, we decided to try the following questions, and they worked much better.

Please score yourself on how comfortable/able you are doing the following tasks online, where the scoring is:

0 = Can't do/don't know what it is

1 = Would need help to do

2 = Could do with difficulty

3 = Could do

4 = Expert (could teach others)

| Task | 0 | 1 | 2 | 3 | 4 |
|---|---|---|---|---|---|
| Send an email | | | | | |
| Delete spam emails | | | | | |
| Find stuff using a search engine such as Google | | | | | |
| Watch a video on YouTube or iPlayer | | | | | |
| Fill out an application form or buy something online | | | | | |
| Use a mobile app | | | | | |
| Evaluate whether a website is safe/can be trusted | | | | | |
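With seven tasks each rated from 0 to 4, a respondent's answers can be totalled to a score between 0 and 28. The post doesn't spell out how totals are calculated, so the sketch below assumes a plain sum of the seven ratings (which fits the 15 and 22 thresholds used later). The `TASKS` tuple and `total_score` function are hypothetical names for illustration only.

```python
# Hypothetical sketch: total one screener response, ASSUMING the score is a
# plain sum of the seven 0-4 self-ratings (the post does not state the formula).
TASKS = (
    "Send an email",
    "Delete spam emails",
    "Find stuff using a search engine such as Google",
    "Watch a video on YouTube or iPlayer",
    "Fill out an application form or buy something online",
    "Use a mobile app",
    "Evaluate whether a website is safe/can be trusted",
)

MIN_RATING, MAX_RATING = 0, 4  # 0 = can't do, 4 = expert (could teach others)


def total_score(ratings: dict[str, int]) -> int:
    """Return the summed score (0 to 28) for one respondent.

    Raises ValueError if a task is missing or a rating is outside 0-4.
    """
    missing = [task for task in TASKS if task not in ratings]
    if missing:
        raise ValueError(f"missing ratings for: {missing}")
    for task in TASKS:
        rating = ratings[task]
        if not MIN_RATING <= rating <= MAX_RATING:
            raise ValueError(f"rating for {task!r} must be 0-4, got {rating}")
    return sum(ratings[task] for task in TASKS)


if __name__ == "__main__":
    example = dict(zip(TASKS, [3, 2, 3, 3, 1, 2, 1]))
    print(total_score(example))  # 15
```

Validating each rating up front means a mistyped answer (say, a 5) is caught before it quietly inflates someone's total.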

How this helped

This helped us recruit research participants who had exactly the level of skill we were looking for. It removed the ambiguity of what being 'good at using the internet' means, and gave potential participants a more objective way of rating themselves.

Who we now recruit

We now tend to recruit a mix of people: we request two people who score 15 and above, and just one person who scores 22 and above. This gives us a mix of internet ability and a range of views.
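Once each candidate's answers have been totalled, the thresholds in the brief can be applied mechanically. This is a sketch under an explicit assumption: it reads "two people who score 15 and above" as a 15 to 21 band, so that pair doesn't overlap with the "one person who scores 22 and above". The post doesn't confirm that reading. The `band` and `pick_mix` functions and the candidate data are hypothetical.

```python
# Hypothetical sketch: choose a session mix from screened candidates' total scores.
# ASSUMPTION: "two people who score 15 and above" means 15-21, so they do not
# overlap with the "one person who scores 22 and above".
LOWER_THRESHOLD = 15
UPPER_THRESHOLD = 22


def band(score: int) -> str:
    """Place a total score (0-28) into one of three bands."""
    if score >= UPPER_THRESHOLD:
        return "22+"
    if score >= LOWER_THRESHOLD:
        return "15-21"
    return "below 15"


def pick_mix(candidates: dict[str, int], quotas: dict[str, int]) -> list[str]:
    """Return candidate names filling each band's quota, in input order.

    Raises ValueError if any band cannot be filled.
    """
    chosen: list[str] = []
    for wanted_band, count in quotas.items():
        matches = [name for name, score in candidates.items() if band(score) == wanted_band]
        if len(matches) < count:
            raise ValueError(
                f"only {len(matches)} candidate(s) in band {wanted_band}, need {count}"
            )
        chosen.extend(matches[:count])
    return chosen


if __name__ == "__main__":
    screened = {"A": 9, "B": 16, "C": 24, "D": 18, "E": 20}
    print(pick_mix(screened, {"15-21": 2, "22+": 1}))  # ['B', 'D', 'C']
```

Keeping the thresholds as named constants means the bands can be adjusted if 15 and 22 turn out not to suit another service.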

I expect other services could use this approach with little difficulty. It continues to work well for us. I’d be interested in hearing your thoughts; there's always room for improvement.

Keep in touch. Sign up to email updates from this blog. Follow Simon on Twitter.

13 comments

Comment by Sainkho

I'm curious about the qualification on 'Expert' ('could teach others'), and why you thought it necessary to qualify it.

Not all who are expert at something desire to share and educate. So might that not put some off selecting that option?

Comment by Simon Hurst

Hi Sainkho, and thanks for the comment. It was more an attempt to give the person being asked some clarity about the kind of ability level we thought this covered.

I think you raise a good point, and one I'd certainly watch out for when using this in the future. We have a brilliant relationship with our recruiter and we always discuss changes we make to our briefs. They've not made us aware of any issue, and we've been using this approach for months (with research every two weeks). It was actually partly through their feedback that we changed it in the first place; they too recognised that we weren't really getting the kind of candidates we were after.

Comment by Ian

It does also say 'could' share with others, giving them the option to share or not, but they have the expertise to share if they choose.

Comment by Lou

Excellent post. I think those questions make more sense, and are in any case much like the type of questions we'd be asking in other sections on a screener. I did a rough calc to score my mum (78, carer) and she came out with a 9, which is as it should be: I fill out all online forms for her. I will be pinching this for my own use. Thanks 🙂

Comment by Jess G

A great post & fantastic screener which I may be pinching / using in my research! Thanks Simon

Comment by Brenda G

A great post. I will also be using data from such research when considering how assisted digital users' needs can be met. Thanks.

Comment by Lisa Halabi

Great post, thank you for sharing. This is a great initiative. Quick question, you write "we now tend to recruit a mix of people: we request two people who score 15 and above and just one person who scores 22 and above", are these 2 people the ones you define as "low digital skills"? If not, what score defines low digital skill to you? Many thanks.

Comment by Simon Hurst

Hi Lisa, thanks for the kind comments. I still use this approach and 15 feels about right to me. It's not an exact science, just a good indicator.

Hope it proves useful to you.

Comment by Fia

Very interesting, thanks for sharing! I'd also be interested in hearing how you actually recruited the users. Did you use an agency or did you reach out via other channels?

Comment by Simon Hurst

Hi Fia, I tend to use agencies for something like this. On the project I'm working on now we recruit a lot through the third sector, but agency recruitment allows us to be really specific about the kind of person we get.

Comment by Evy

I really like this new way of asking questions. I would have no difficulty answering the first set of questions, but the new style felt more natural and it was quicker for me to decide which score to select. So it also benefits people with higher digital skills. Great work!

Comment by David Murphy

Fascinating article, how has it worked out in practice? (I notice the article is about two years old, so time to trial quite widely I would guess)

Comment by Hayley

I've been using this for recruitment at nhs.uk and it's worked well. It would be useful to relate each score to a scale so that people know what the numbers mean, e.g. scores between 15 and 20 = basic digital literacy.

When I communicate this to colleagues without a related scale, people generally understand that lower numbers mean lower digital literacy.